Commentary by Internet Ethics Program Director Irina Raicu and colleagues

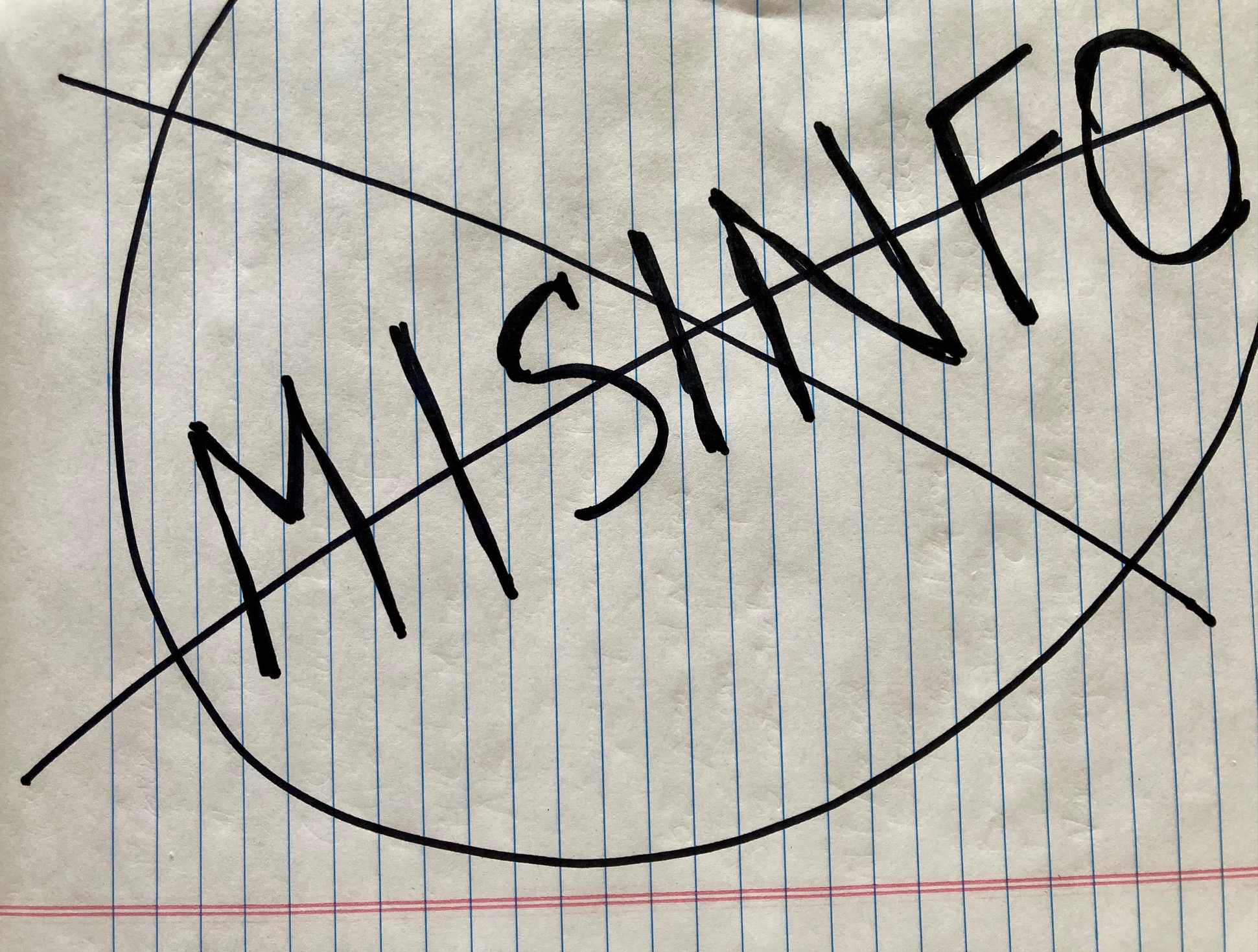

Renewing a call for careful social media sharing

On navigating the turbulent flows of deepfakes, data voids, and availability cascades.

On the "solutionless dilemma" of users being misled by chatbots

Too many users don’t realize that chatbots sound certain but shouldn’t; is that a design flaw?

Questions Left Unanswered at Another Hearing Lambasting Social Media CEOs

What if companies were to invest significantly more in content moderation?

With apologies to Mary Oliver’s “Wild Geese”

When social media calls to you, harsh and exciting

Caring for the whole person in the time of chatbots

A snapshot, one year after the public release of ChatGPT

Generating Awareness

We need to be discerning about AI usage, and understand its environmental cost.

A Recent Conversation with Three Experts

Video of our recent panel discussion is now available.

What is the effect of calling a product "experimental" and deploying it?

We need more innovation and disruption—from different constituencies.

There is a lot of work to be done, by all of us who are impacted by AI tools.
