Deep Learning Approaches for Video Prediction

Nam Ling, Ying Liu, and Mareeta Mathai, Computer Science and Engineering

The task of video prediction is to generate unseen future video frames from past ones. It is an emerging yet challenging task because of its inherent uncertainty and complex spatiotemporal dynamics. The ability to anticipate future events through video prediction has applications in many prediction systems, such as self-driving cars, weather forecasting, traffic flow prediction, and video compression. Owing to the success of deep learning in computer vision, many deep learning architectures, such as convolutional neural networks (CNNs), long short-term memory networks (LSTMs), and convolutional LSTMs (ConvLSTMs), have been explored to improve prediction accuracy. In deep learning-based video prediction, the network learns an internal representation of the video, mainly its spatial correlations and temporal dynamics, and uses it to predict the next frames.
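To make the idea of learning spatial correlations and temporal dynamics more concrete, the sketch below implements one step of a simplified ConvLSTM cell in NumPy. It is an illustration, not the authors' model: the gates are computed with 2D convolutions (capturing spatial structure), while the cell state `c` carries information across time steps. The helper names (`conv2d_same`, `convlstm_step`), the tensor shapes, and the random weights are assumptions chosen for the example, and the peephole terms of the original ConvLSTM formulation are omitted.

```python
import numpy as np

def conv2d_same(x, w):
    """'Same'-padded 2D cross-correlation with zero padding.
    x: (C_in, H, W); w: (C_out, C_in, k, k) with odd k -> (C_out, H, W)."""
    c_out, _, k, _ = w.shape
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))  # zero-pad height and width only
    h, wd = x.shape[1:]
    out = np.zeros((c_out, h, wd))
    for i in range(k):
        for j in range(k):
            # Add the contribution of kernel tap (i, j) at every pixel at once.
            patch = xp[:, i:i + h, j:j + wd]  # (C_in, H, W)
            out += np.einsum("oc,chw->ohw", w[:, :, i, j], patch)
    return out

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def convlstm_step(x, h, c, w_x, w_h, b):
    """One simplified ConvLSTM step (no peephole terms).
    x: (C_in, H, W) frame; h, c: (C_hid, H, W) hidden and cell state;
    w_x: (4*C_hid, C_in, k, k); w_h: (4*C_hid, C_hid, k, k); b: (4*C_hid,)."""
    z = conv2d_same(x, w_x) + conv2d_same(h, w_h) + b[:, None, None]
    i, f, o, g = np.split(z, 4, axis=0)  # input, forget, output gates, candidate
    c_next = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
    h_next = sigmoid(o) * np.tanh(c_next)
    return h_next, c_next

# Usage: run three past frames through the cell with random (untrained) weights.
rng = np.random.default_rng(0)
C_in, C_hid, H, W, k = 1, 4, 8, 8, 3
w_x = rng.normal(0, 0.1, (4 * C_hid, C_in, k, k))
w_h = rng.normal(0, 0.1, (4 * C_hid, C_hid, k, k))
b = np.zeros(4 * C_hid)
frames = rng.random((3, C_in, H, W))
h = np.zeros((C_hid, H, W))
c = np.zeros((C_hid, H, W))
for x in frames:
    h, c = convlstm_step(x, h, c, w_x, w_h, b)
print(h.shape)  # (4, 8, 8)
```

In a full predictor, a decoding layer (for example another convolution) would map the final hidden state `h` back to pixel space to produce the next frame. Note that the loop over `frames` is inherently sequential, which is the training-time cost that transformer-based designs try to avoid.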

In light of Green AI, which aims for efficient, environmentally friendly solutions alongside accuracy, we concentrate on lightweight methods for video prediction. Such methods suit memory-constrained and computation-limited platforms, such as mobile and embedded devices. Transformers are among the latest innovations in deep learning. They have proved successful in natural language processing (NLP) and are now being explored in computer vision as well. Transformers can efficiently capture long-term dependencies in video and, unlike LSTM-based models, train much faster because their attention mechanism processes all time steps in parallel rather than one step at a time. For our lightweight video prediction technique, we focus on transformer-based architectures combined with CNN/LSTM methods that use fewer parameters. WAVE facilities have helped us develop our deep learning algorithms and have greatly accelerated our experiments.
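The parallelism argument can be illustrated with single-head scaled dot-product self-attention over a short sequence of frame tokens. In this NumPy sketch, the outputs for all time steps come from a handful of matrix products with no loop over time, in contrast to the step-by-step recurrence of an LSTM. It assumes each frame has already been encoded (for example by a CNN) into a feature vector; the random `tokens`, the weight matrices, and the causal mask (so that a frame attends only to itself and earlier frames) are assumptions for illustration, not details of the authors' architecture.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def causal_self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product attention over T frame tokens.
    tokens: (T, d_model); w_q, w_k, w_v: (d_model, d_k).
    Returns the attended outputs (T, d_k) and the attention weights (T, T)."""
    queries, keys, values = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = queries @ keys.T / np.sqrt(keys.shape[-1])  # (T, T), all pairs at once
    t = tokens.shape[0]
    future = np.triu(np.ones((t, t), dtype=bool), k=1)   # True above the diagonal
    scores = np.where(future, -np.inf, scores)           # hide future frames
    weights = softmax(scores, axis=-1)
    return weights @ values, weights

# Usage: random stand-ins for CNN features of frames t, t+1, t+2.
rng = np.random.default_rng(0)
T, d_model, d_k = 3, 16, 8
tokens = rng.normal(size=(T, d_model))
w_q, w_k, w_v = (rng.normal(0, 0.1, (d_model, d_k)) for _ in range(3))
out, weights = causal_self_attention(tokens, w_q, w_k, w_v)
print(out.shape)  # (3, 8)
```

Because every row of `weights` is computed simultaneously, a GPU can process a whole training clip in one pass. The trade-off is that the score matrix grows quadratically with the number of tokens, which is one reason lightweight designs keep token counts and dimensions small.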

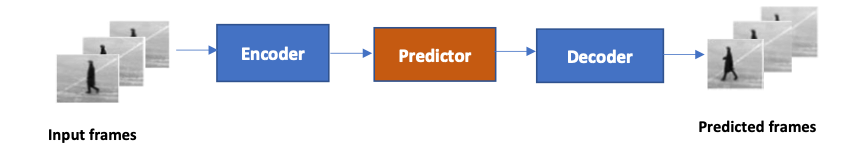

Figure 1. The architecture of the proposed 3D prediction network. Three input frames at time steps t, t+1, and t+2 are fed to the network, which predicts the frames at time steps t+1, t+2, and t+3.
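The input/output alignment in Figure 1 corresponds to a target window that is the input window shifted one step ahead in time. The sketch below shows one plausible way to cut training pairs from a clip under that convention. The function name `make_shifted_pairs`, the `(T, H, W)` grayscale layout, and the toy clip whose pixel values equal the frame index are assumptions for illustration only.

```python
import numpy as np

def make_shifted_pairs(video, n_in=3):
    """Cut a clip of shape (T, H, W) into (input, target) pairs.
    Inputs cover frames t..t+n_in-1; targets cover t+1..t+n_in
    (e.g. inputs at t, t+1, t+2 and targets at t+1, t+2, t+3)."""
    pairs = []
    for t in range(video.shape[0] - n_in):
        pairs.append((video[t:t + n_in], video[t + 1:t + n_in + 1]))
    return pairs

# Usage: a toy 6-frame clip of 2x2 frames whose pixels equal the frame index.
video = np.arange(6, dtype=float)[:, None, None] * np.ones((1, 2, 2))
pairs = make_shifted_pairs(video)
print([inp[:, 0, 0].tolist() for inp, _ in pairs])
# [[0.0, 1.0, 2.0], [1.0, 2.0, 3.0], [2.0, 3.0, 4.0]]
```

Each input slice is itself a small spatiotemporal volume, which is the kind of tensor a 3D convolution operates on; how the proposed network actually arranges its inputs is not specified here.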