The WAVE HPC Center and the WAVE+Imaginarium Lab are used by faculty, staff, and students to conduct research and develop course curriculum within the university. Below are examples of the various research projects that have used and benefited from the WAVE facilities.

Reconstructing Two-Level Fluctuators Using Qubit Measurements

Guy Ramon and Rob Cady, Physics

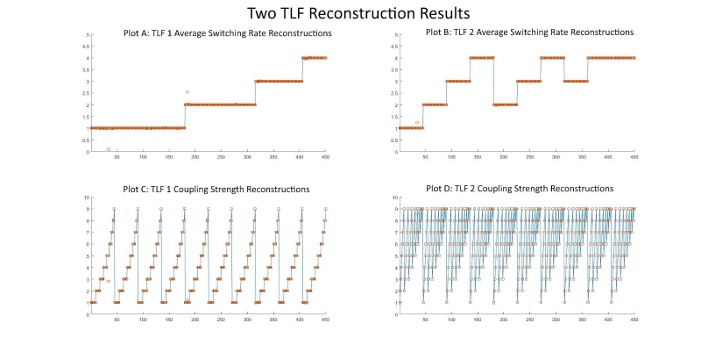

Qubits are the quantum computing version of classical bits, and they are notoriously sensitive to outside disturbances. In our research, a qubit’s signal attenuation is simulated in the presence of random telegraph noise, which is produced by one or more two-level fluctuators (TLFs). Qubit signal attenuation measurements are recorded after applying various control pulse sequences, and these data are then used by a minimization algorithm to reconstruct the TLF’s switching rate and coupling strength with the qubit. In order to simulate a more realistic noise environment, our code also accounts for the measurement errors that are inherent to any experimental apparatus. However, introducing a random element to our simulations necessitates averaging results over many trials, which makes the WAVE HPC’s parallel computing abilities indispensable for our work. Our procedure has successfully reconstructed scenarios involving both a single TLF and two independent TLFs operating simultaneously on the qubit, which suggests that our method could be used by quantum computing experimentalists to help diagnose noise sources in their own systems.

Results from a recent two-TLF reconstruction test. Blue lines represent the actual TLF parameters, and orange squares represent our reconstructions. Plots A and C show the first TLF’s characteristics and how the algorithm reconstructed them; plots B and D do the same for the second TLF.
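To illustrate why averaging over many trials is needed, here is a minimal sketch (not the authors' code) of a qubit signal decaying under random telegraph noise from a single TLF. It models only free evolution, with no control pulses and no measurement error. The function names, the time step, the phase convention (phase = v times the time integral of the TLF state), and all parameter values are assumptions made for illustration.

```python
import math
import random


def rtn_phase(v, gamma, t_total, dt, rng):
    """Phase accumulated by the qubit along one random TLF trajectory.

    The TLF state xi is +1 or -1 and flips with probability gamma * dt
    in each time step (assumed convention: gamma is the flip rate).
    """
    xi = rng.choice((-1.0, 1.0))
    phase = 0.0
    for _ in range(int(round(t_total / dt))):
        if rng.random() < gamma * dt:
            xi = -xi
        phase += v * xi * dt
    return phase


def averaged_signal(v, gamma, t_total, trials=2000, dt=0.01, seed=0):
    """Qubit signal estimated as the trial average of cos(phase)."""
    rng = random.Random(seed)
    total = sum(math.cos(rtn_phase(v, gamma, t_total, dt, rng))
                for _ in range(trials))
    return total / trials


if __name__ == "__main__":
    for t in (0.0, 0.5, 1.0, 2.0, 3.0):
        print(t, round(averaged_signal(v=1.0, gamma=1.0, t_total=t), 3))
```

Each trial is independent of the others, which is what makes this kind of averaging a natural fit for parallel execution on a cluster.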

Deep Learning Approaches for Video Prediction

Nam Ling, Ying Liu, and Mareeta Mathai, Computer Science and Engineering

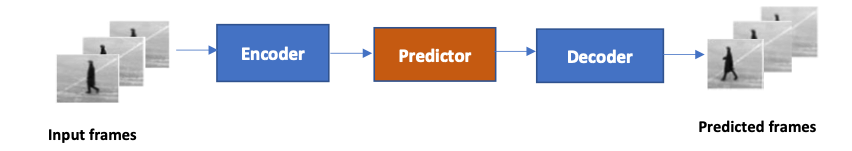

The task of video prediction is to generate unseen future video frames based on past ones. It is an emerging yet challenging task because of its inherent uncertainty and complex spatiotemporal dynamics. The ability to anticipate future events from video has applications in prediction systems such as self-driving cars, weather forecasting, traffic flow prediction, and video compression. Following the success of deep learning in computer vision, many deep learning architectures, including convolutional neural networks (CNNs), long short-term memory networks (LSTMs), and convolutional LSTMs (ConvLSTMs), have been explored to improve prediction accuracy. In deep learning-based video prediction, the model learns an internal representation of the video, mainly its spatial correlations and temporal dynamics, and uses it to predict the next frames.

In light of Green AI, which aims for efficient, environmentally friendly solutions alongside accuracy, we concentrate on lightweight methods for video prediction. Such methods are suitable for memory-constrained and computation-limited platforms, such as mobile and embedded devices. Transformers are a recent innovation in deep learning. They have proved successful in natural language processing (NLP) and are now being explored in computer vision as well. Unlike LSTM-based models, transformers can efficiently capture long-term dependencies in video and train much faster because their computation is parallelizable. For our lightweight video prediction technique, we focus on a transformer-based architecture combined with CNN/LSTM methods that uses fewer parameters. WAVE facilities have helped us develop deep learning algorithms and have greatly accelerated our experiments.

Figure 1. The architecture of the proposed 3D prediction network. Three input frames at time steps t, t+1, t+2 are fed to the network and the network predicts frames at time steps t+1, t+2 and t+3.

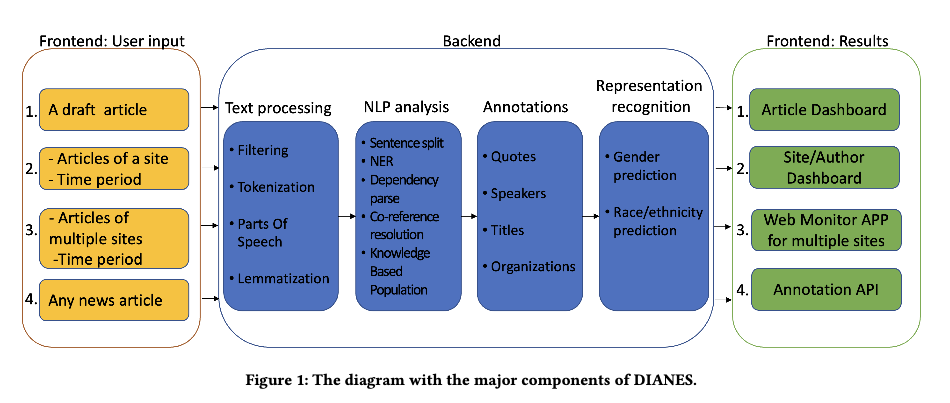
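The figure caption describes inputs at time steps t, t+1, t+2 paired with predictions at t+1, t+2, t+3. A minimal sketch of how such shifted input/target windows could be built from a frame sequence follows; the function name `shifted_windows` and the use of plain lists in place of image arrays are assumptions for illustration, not the authors' data pipeline.

```python
def shifted_windows(frames, window=3):
    """Pair each run of `window` frames with the same run shifted one step ahead."""
    pairs = []
    for t in range(len(frames) - window):
        inputs = frames[t:t + window]            # frames t .. t+window-1
        targets = frames[t + 1:t + window + 1]   # frames t+1 .. t+window
        pairs.append((inputs, targets))
    return pairs


if __name__ == "__main__":
    # Integers stand in for video frames.
    for inputs, targets in shifted_windows([0, 1, 2, 3, 4]):
        print(inputs, "->", targets)
```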

DIANES: A DEI Audit Toolkit for News Sources

Subramaniam Vincent, Markkula Center for Applied Ethics

Yi Fang, Computer Science and Engineering

Professional news media organizations have always touted the importance that they give to multiple perspectives. However, in practice, the traditional approach to all-sides has favored people in the dominant culture. Hence it has come under ethical critique under the new norms of diversity, equity, and inclusion (DEI). When DEI is applied to journalism, it goes beyond conventional notions of impartiality and bias and instead democratizes the journalistic practice of sourcing – who is quoted or interviewed, who is not, how often, from which demographic group, gender, and so forth.

The Markkula Center's Journalism and Media Ethics program, in collaboration with the School of Engineering, has built a series of back-end and front-end projects that show how different types of news sites perform on source diversity metrics. We have successfully repurposed our extraction, parsing, pre-processing, and analysis pipelines on WAVE into the back-end of our DEI Audit prototype and toolkit project. This has created an opportunity for real-world American local newsrooms to examine and improve their everyday source diversity at very low or zero prototype costs.

A research paper describing the DIANES system has been accepted for publication at ACM SIGIR 2022.

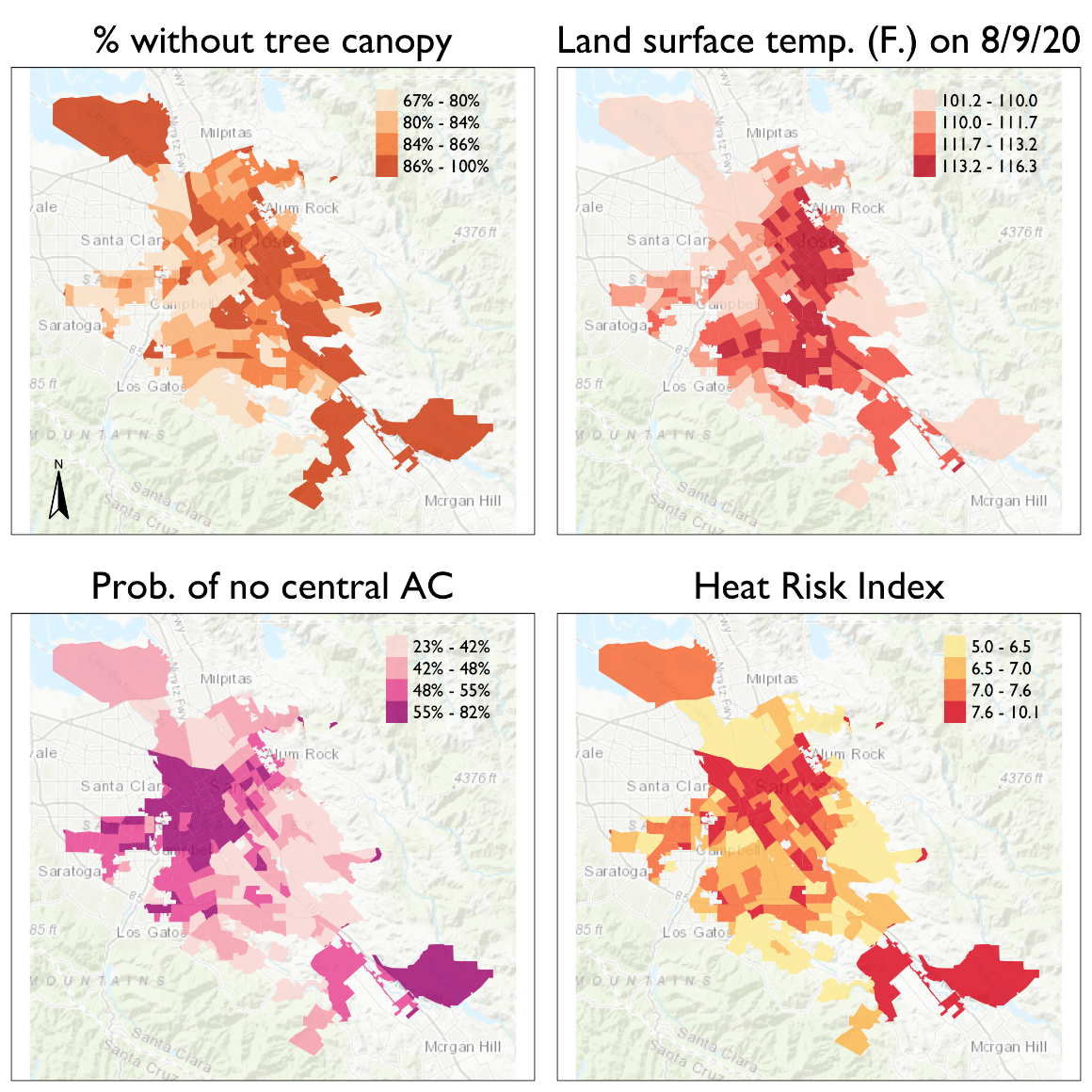

Housing and Urban Heat: Assessing Risk Disparities

C. J. Gabbe, Environmental Studies and Sciences

Heat is the leading weather-related cause of death in the United States, and housing characteristics affect heat-related mortality. Dr. C.J. Gabbe's research (in collaboration with Georgia Tech's Evan Mallen and SCU alumnus Alex Varni) answers two questions. First, how do heat risk measures vary by housing type and location in San José, California? Second, what housing and neighborhood factors are associated with greater heat risk? We answer these questions using a combination of descriptive statistics, exploratory mapping, and regression models. Our findings indicate that different housing types face varying degrees of heat risk and the largest disparities are between detached single-family (lowest heat risk) and multifamily rental (highest heat risk). Air conditioning availability is a major contributing factor, and there are also heat risk disparities for residents of neighborhoods with larger shares of Hispanic and Asian residents. This research demonstrates the need to understand heat risk at the parcel scale, and suggests to policymakers the importance of heat mitigation strategies that focus on multifamily rental housing and communities of color.

Molecular Modeling of Protein-DNA Interactions

Michelle McCully, Biology

Software: NAMD, VMD, ilmm; Hardware: GPU-parallelized and CPU-parallelized nodes

Protein modeling uses computational models to visualize protein structure and dynamics in order to predict and describe how proteins function. Proteins are large molecules that are made in all organisms and perform countless jobs in cells. The McCully Lab is particularly interested in the differences between the proteins that organisms make naturally and proteins that scientists design in the lab. They use a combination of experimental, computational, and theoretical techniques to investigate the relationships between these proteins’ shapes, movements, and functions. This work allows scientists to understand the mechanisms by which proteins maintain their functions in harsh environments, such as industrial settings and long-term storage. Undergraduate student researchers use the WAVE facilities to perform molecular dynamics simulations of protein-DNA complexes in order to predict how well they bind. In the image, the blue protein stays bound to DNA (gray) in their simulations, whereas the red one does not.

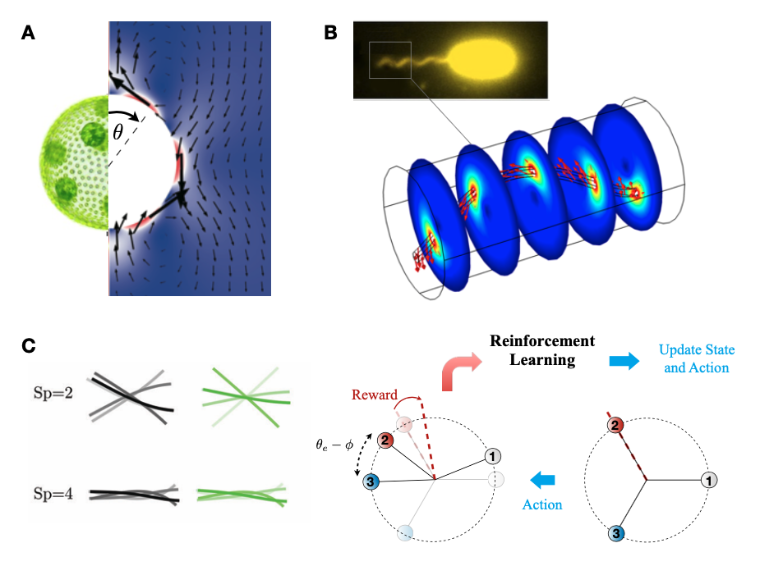

Theoretical and Computational Mechanics

On Shun Pak, Mechanical Engineering

Dr. On Shun Pak's research focuses on the fluid mechanics of biological and artificial microscopic swimmers. Locomotion of swimming microorganisms such as sperm cells and bacteria plays important roles in different biological processes, including reproduction and bacterial infection. Computational tools are used to better understand the physical mechanisms underlying their swimming motion (panels A and B). In addition, engineering principles and machine learning techniques are applied to design artificial microscopic swimmers (panel C) for potential biomedical applications such as drug delivery and microsurgery.

The biological images in panels A and B are adapted, respectively, from Pedley, IMA J. Appl. Math., 81:488–521 (2016) and Chen et al., eLife, 6:e22140 (2017).

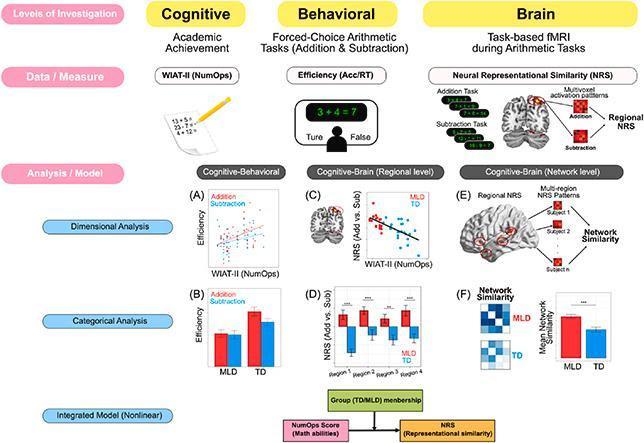

Cognitive and Computational Neuroscience

Lang Chen, Psychology & Neuroscience

Dr. Lang Chen's research focuses on employing a multi-level analytical framework for investigating individual differences in behavioral, cognitive and neural profiles of differentiation between distinct numerical operations in young children. The study revealed that children with lower math abilities showed undifferentiated performance on addition and subtraction tasks, and more interestingly, their neural activity patterns for these two operations were quite similar across a distributed brain network supporting quantity, phonological, attentional, semantic and control processes. These findings identify the lack of distinct neural representations as a novel neurobiological feature of individual differences in children's numerical problem-solving abilities and an early developmental biomarker of low math skills. These results have been published in several peer-reviewed articles including:

- Linear and nonlinear profiles of weak behavioral and neural differentiation between numerical operations in children with math learning difficulties, Lang Chen, Teresa Iuculano, Percy Mistry, Jonathan Nicholas, Yuan Zhang, Vinod Menon, Neuropsychologia, Volume 160, 2021

- Category-specific activations depend on imaging mode, task demand, and stimuli modality: An ALE meta-analysis, Kimberly D. Derderian, Xiaojue Zhou, Lang Chen, Neuropsychologia, Volume 161, 2021

Multi-level analytical framework for investigating individual differences in behavioral, cognitive and neural profiles of differentiation between distinct numerical operations.

Learned Image and Video Coding

Ying Liu, Computer Science and Engineering

Video cameras have been proliferating at an astonishing rate in recent years. It is predicted that the world will have approximately 13 billion cameras by 2030. This huge amount of visual data can be leveraged in a wide range of existing and future applications, from video streaming and mobile video sharing to surveillance cameras and autonomous vehicles. Recent advances in deep learning have achieved great success in computer vision tasks. The Video and Image Processing (VIP) Lab focuses on deep learning-based image and video coding, video prediction and generation, and other visual recognition tasks. The tools used include, but are not limited to, convolutional neural networks (CNNs), generative adversarial networks (GANs), recurrent neural networks (RNNs), and transformers.

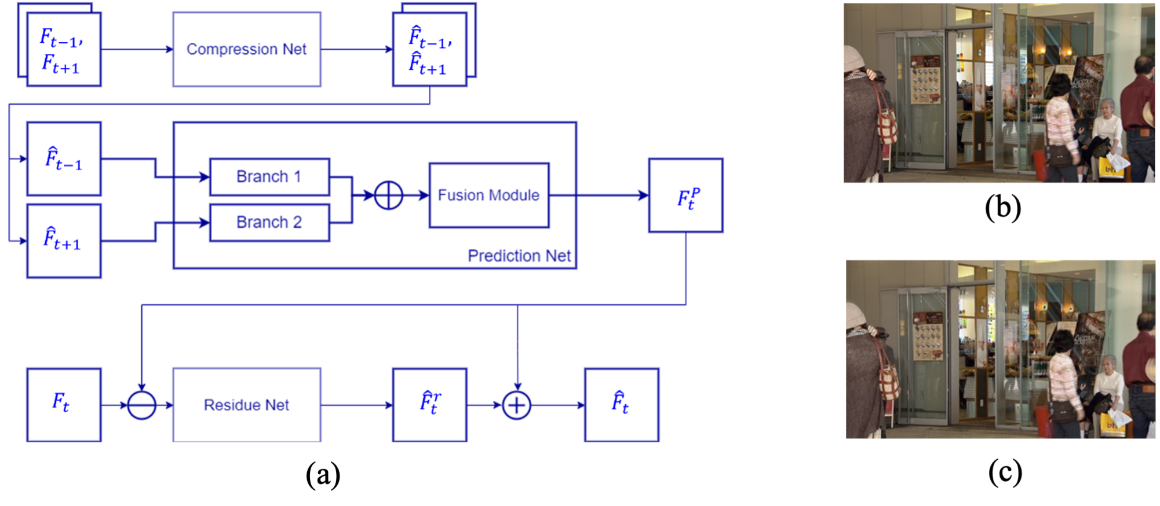

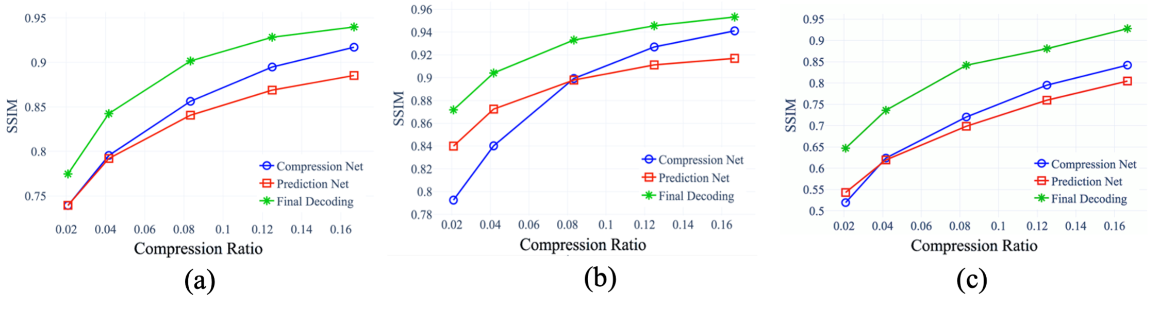

Figure 1. (a) The architecture of the proposed motion-aware deep video coding network; (b) ground-truth frame; and (c) decoded frame.

Figure 2. The SSIM versus compression ratios for (a) the BQ Mall video sequence; (b) the Basketball Drill video sequence; and (c) the Party Scene video sequence.

Students in the VIP Lab use the WAVE facilities to develop deep learning algorithms and train deep models on large-scale datasets. The VIP Lab has successfully obtained funding from the National Science Foundation and the School of Engineering at Santa Clara University.

More details can be found at The Video and Image Processing (VIP) Lab.

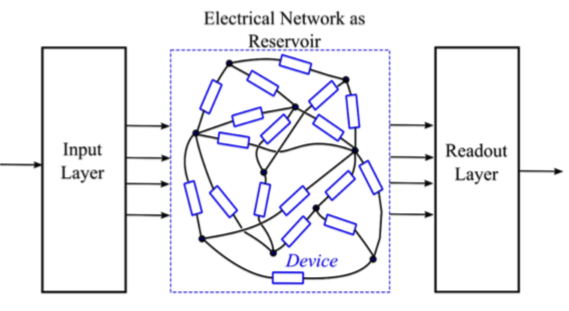

Reservoir Computing Architecture as Neuromorphic Computing

Dat Tran, Electrical and Computer Engineering

Reservoir Computing (RC) is an alternative to the traditional RNN for machine learning that avoids the training of large recurrent networks. In an RC architecture, inputs are mapped to the internal states of a reservoir, and the outputs from the untrained reservoir provide input signals to a readout layer. Only the readout layer is trained, using a simple algorithm, to perform a particular task. The reservoirs are simulated with different devices using Modified Nodal Analysis (MNA) to build PSpice simulation platforms. The software is Python-based and uses the OrGanic Environment for Reservoir computing (OGER), a Python toolbox for building, training, and evaluating modular learning architectures on large datasets.
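The split between an untrained reservoir and a trained readout can be sketched in a few lines of standard-library Python. This is an illustrative echo-state-style toy, not the lab's PSpice/OGER workflow: the reservoir size, the tanh nodes, the row-sum rescaling, the ridge-regression readout, and the "recall the previous input" task are all assumptions chosen to keep the example small.

```python
import math
import random


def make_reservoir(n, rng, norm=0.9):
    """Fixed random recurrent weights, rescaled so the largest absolute
    row sum equals `norm` (this bounds the spectral radius below 1)."""
    w = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    scale = norm / max(sum(abs(v) for v in row) for row in w)
    return [[v * scale for v in row] for row in w]


def run_reservoir(w, w_in, inputs):
    """Drive the untrained reservoir and record its state at every step."""
    n = len(w)
    x = [0.0] * n
    states = []
    for u in inputs:
        x = [math.tanh(sum(w[i][j] * x[j] for j in range(n)) + w_in[i] * u)
             for i in range(n)]
        states.append(x + [1.0])  # trailing 1.0 acts as a bias feature
    return states


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def train_readout(states, targets, ridge=1e-6):
    """Ridge regression: the only trained part of the architecture."""
    d = len(states[0])
    gram = [[sum(s[i] * s[j] for s in states) + (ridge if i == j else 0.0)
             for j in range(d)] for i in range(d)]
    rhs = [sum(s[i] * y for s, y in zip(states, targets)) for i in range(d)]
    return solve(gram, rhs)


def predict(states, w_out):
    return [sum(a * b for a, b in zip(s, w_out)) for s in states]


rng = random.Random(0)
n_nodes = 20
w = make_reservoir(n_nodes, rng)
w_in = [rng.uniform(-0.5, 0.5) for _ in range(n_nodes)]

inputs = [rng.uniform(-1.0, 1.0) for _ in range(400)]
targets = [0.0] + inputs[:-1]  # toy task: recall the previous input

states = run_reservoir(w, w_in, inputs)
w_out = train_readout(states[50:], targets[50:])  # skip a warm-up period
preds = predict(states[50:], w_out)
mse = sum((p - y) ** 2 for p, y in zip(preds, targets[50:])) / len(preds)

if __name__ == "__main__":
    print("training MSE on the one-step recall task:", mse)
```

The recurrent weights `w` and input weights `w_in` are never updated; only `w_out` is fit, which is why training reduces to a single linear solve.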