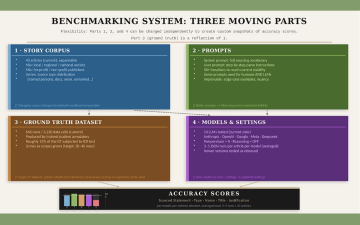

Benchmarking System Diagram

We benchmarked 13 leading LLMs—from Anthropic, OpenAI, Google, Meta, and Deepseek—on their ability to identify and categorize 646 source attributions from 43 professionally published news articles. Using a ground truth dataset built by trained student annotators, we measured accuracy across five sourcing elements: sourced statements, type of source, name of source, title, and source justification. Only two models reached an 80% accuracy threshold for identifying sourced statements. All models performed well on structured elements like source type, name, and title. Every model struggled with source justification annotation—the element we assert is critical to ethics-based applications—with the best score reaching only 56%. The complete dataset, prompts, and scoring code are publicly available.

Model Performance Summary

This table displays accuracy scores for each model across all annotation elements. Green highlighting (80%+) indicates strong performance, yellow (60-80%) shows moderate capability, and red (<60%) signals areas requiring improvement.

| Model | Sourced Statement | Type of Source | Name of Source | Title of Source |

Source Justification

|

| Gemini 2.5 Pro | 83.66% | 88.60% | 79.94% | 79.81% | 44.79% |

|---|---|---|---|---|---|

| Claude 3.7 Sonnet-Thinking | 80.48% | 90.01% | 84.79% | 84.57% | 49.00% |

| Claude 3.7 Sonnet | 80.01% | 88.25% | 82.67% | 82.61% | 51.95% |

| GPT 4.1 | 78.49% | 91.07% | 86.61% | 85.61% | 38.91% |

| Claude 4 Sonnet | 76.76% | 91.43% | 85.47% | 86.03% | 56.17% |

| Gemini 1.5 Pro | 76.25% | 91.89% | 89.81% | 89.48% | 49.79% |

| GPT 5 | 73.72% | 90.49% | 85.25% | 86.66% | 41.46% |

| Deepseek Chat-V3-0324 | 73.37% | 88.83% | 84.83% | 85.24% | 43.99% |

| ChatGPT 4o-latest | 72.72% | 93.45% | 87.69% | 87.53% | 41.56% |

| Deepseek R1-0528 | 67.46% | 92.95% | 84.81% | 83.97% | 40.09% |

| Llama 4 Maverick | 66.59% | 90.59% | 86.16% | 86.09% | 27.86% |

| Llama 3.1-405B | 64.90% | 90.55% | 85.32% | 85.26% | 37.35% |

| Claude 3.5 Sonnet | 61.82% | 92.49% | 87.93% | 87.34% | 49.33% |

What you need to know

- All 13 models performed well (80%+ accuracy) on detecting the structured elements—source type (88–93%), name of the source (80–90%), and title (80–89%). Title-based source analysis of news articles is an easier starting point for applications.

- Only 2 of 13 models meet a practical accuracy bar (80%) for sourced attributions enumeration in news stories. Gemini 2.5 Pro (83.66%) and Claude 3.7 Sonnet (80.01%). This is a foundational task all other sourcing analysis depends on.

- Source justification remains out of reach for every model. The highest score for identifying why sources are included—the annotation element critical to ethics-based news applications and source diversity audits—was 56%.

- Analysis applications on structured sourcing elements are feasible. All models reliably extract source type, name, and title (80–93% accuracy), enabling applications such as expert-vs-non-expert source proportions, organizational representation analysis, and source authority patterns. For e.g. news reporters can use the prompts and a high-scoring model to generate basic sourcing audit files of their own stories.

- The dataset and code are fully open. 646 ground truth annotations across 43 news articles, all LLM outputs, prompts, and scoring code are publicly available on Hugging Face and GitHub.

Access the Dataset

The complete benchmark dataset is publicly available on Hugging Face. The project code (annotation extraction) and dataset is available on GitHub. Everything needed to reproduce, extend, or build on this benchmark is included.

Dataset License: Apache 2.0

Folder Structure

- news_articles_corpus/ — 43 news article text files, one per story.

- students_annotations_GTs/ — 646 rows of ground truth sourcing annotations (CSV), plus inter-coder reliability scoring code and data.

- Llm_generated_annotations/ — LLM-generated annotation files for all 13 models, 3–5 iterations per article per model.

Prompts folder also included: system prompt (with full sourcing vocabulary definitions) and user prompt (annotation instructions).

File Naming Convention

Articles are numbered 1–43. Every file uses a matching number prefix so article texts, ground truth CSVs, and LLM output CSVs can be cross-referenced directly.

Article text: story-11-Restoring_rights_felons.txt

Ground truth CSV: GT-11Nebraskafelonscrimjusticerev2.csv

LLM annotation CSV: 11Restoring_rights_felonsclaude3_7sonnetv2Sep06.csv

LLM annotation filenames also encode the model name and iteration version, enabling direct comparison across models for the same article.

Example Articles

Four articles used as annotation examples throughout the report:

Article 11: Restoring voting rights for former felons (Nebraska criminal justice reporting)

Article 25: Ballot access for transgender voters (anti-trans laws coverage)

Article 31: Vermont hair discrimination bill (state legislature reporting)

Article 32: OpenAI board restructuring (tech governance reporting)

What Each Annotated CSV Contains

Each row in a ground truth or LLM annotation CSV represents one sourced statement from the article and contains five columns:

Sourced Statement — The verbatim or paraphrased text attributed to a source in the story.

Type of Source — Category: Named Person, Named Organization, Document, Anonymous Source, Unnamed Person, or Unnamed Group of People.

Name of Source — Full name of the person or organization (where applicable).

Title of Source — Formal position, role, or expertise held by the source.

Source Justification — The reporter’s explanation of why this source is included—their connection to the story, lived experience, stakeholder status, or witnessed events.