Harvey Chilcott '23 was a 2022-23 Hackworth Fellow with the Markkula Center for Applied Ethics, and recently graduated from SCU with a biology major and minors in philosophy and biotechnology. Views are his own.

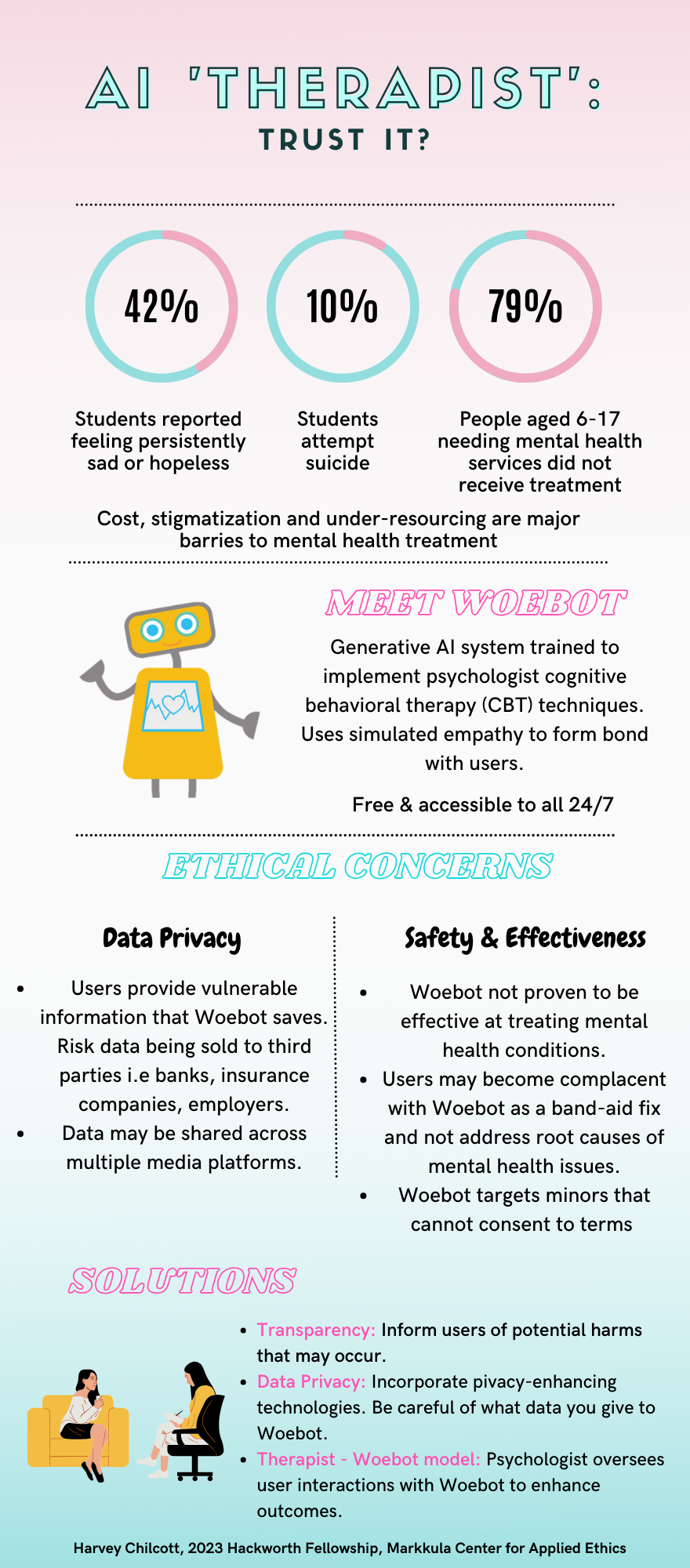

AI ‘Therapist’: Trust It?

Woebot has the potential to alleviate depression and anxiety, which affect a significant portion of society. However, Woebot poses harms that it does not transparently disclose. Harms experienced by users, especially minors, are ignored because of the urgency to reduce depression and anxiety. This article exposes the harms Woebot presents and offers a model Woebot could incorporate to improve user outcomes.

Adolescents are particularly vulnerable to mental illness. According to the CDC, 42% of US high school students reported feeling persistently sad or hopeless. Mental health deterioration has led to 1 in 5 US high school students seriously considering suicide and 10% attempting suicide. Mental health outcomes continue to decline for adolescents, and 79% of US children aged 6-17 who needed mental health services did not receive treatment. Stigmatization, cost and lack of resources are key factors leading to unmet adolescent mental health needs.

This overwhelming need led to the development of Woebot, a generative AI system trained to implement the cognitive behavioral therapy (CBT) techniques used by psychologists. Woebot found that 22% of US adults have used mental health chatbots and that half would consistently use one if their mental health were poor.

Woebot demonstrates potential to be a cost-effective and accessible instrument for mitigating the dire unmet need for mental health resources. However, AI mental health chatbots pose serious ethical concerns. These concerns are analyzed here by evaluating Woebot against the White House’s recently released AI Bill of Rights, which is a blueprint for ethical AI. A clear ethical evaluation of Woebot can be achieved by 1) stating what the AI Bill of Rights proposes ethical AI looks like, 2) assessing whether Woebot meets these ideals and 3) identifying how to incorporate mitigation strategies. This article focuses on the safety and effectiveness criteria.

The safety and effectiveness of AI are important because they help users avoid harm. The White House’s AI Bill of Rights states that AI meets safety and effectiveness standards when it a) completes its intended function, b) is designed to proactively protect users from unintended but reasonably foreseeable harms, and c) is transparent with users about potential harms and lack of effectiveness, thus improving user benefits.

Woebot’s purported benefit is supported by a clinical trial conducted on 70 participants aged 18-28 at Stanford University over 2 weeks that suggested Woebot led to reductions in depression and anxiety compared to reading the National Institute of Mental Health ebook.

Although the results are promising, they are far from proving Woebot is effective. A study of 70 participants over 2 weeks is insufficient to generalize to the entire population, and an ebook control is less informative than a comparison with the psychologist standard of care. Ideally, there should be three experimental groups: 1) psychologist only, 2) AI only, and 3) psychologist aided with AI.

Although Woebot may have acute benefits, it may worsen chronic depression and anxiety. Short-term relief may lull users into false complacency that their issues are being treated, when in reality the root cause is not addressed.

Woebot forms bonds with users within 3-5 days that are comparable to those between human therapists and their patients. Instead of seeking human connection, a lonely person may resort to Woebot for instant connection, which can further prevent them from forming human relationships. This band-aid fix may generate complacency, leading users not to seek human connections and further harming their long-term mental health. Users may also try Woebot, find it ineffective, and conclude that their mental health issues cannot be treated, even by professionals.

Woebot advertises its services to minors aged 13 and above, which is below the age of consent. Despite targeting its services towards minors, Woebot sidesteps responsibility, stating in its legal terms that “parents and legal guardians are responsible for the acts of their minor children when using the Services, whether or not the parent or guardian has authorized such uses.”

This policy is concerning because minors can be harmed when using Woebot and the legal disclaimer is not reasonable to comply with. On these safety and effectiveness points, Woebot fails to meet the White House’s AI Bill of Rights because it does not guarantee that its function of improving mental health is met. The consequence may be worse long-term mental health. Woebot also lacks transparency about potential harms, which inhibits users’ ability to make safe, informed decisions.

Solutions include conducting further research and clinical trials on both the short-term and long-term effects of using Woebot. Human oversight of AI is also essential for mitigating risk, especially in high-stakes situations.

A proposed model in which psychologists and other mental health professionals receive summaries of user conversations with Woebot may significantly improve mental health outcomes because it ensures a trained therapist can intervene if a user’s safety is in jeopardy. Furthermore, therapists can adequately treat patients’ mental health conditions, which Woebot has not been proven to do.

This model increases Woebot’s effectiveness in addressing an individual’s mental health issues, improves population mental health outcomes by freeing psychologists’ time to treat more patients, and is less expensive for patients because it requires less psychologist contact time. Clinical studies of therapy that pairs a psychologist with a mental health AI chatbot will have to be conducted to prove effectiveness.

At a bare minimum, Woebot needs to be more transparent with users about safety and effectiveness, notifying them of reasonably foreseeable harms such as complacency, minor consent and breaches in data privacy. Woebot should also constantly encourage users to seek professional help and remind them that Woebot is a tool, not a therapist.

Woebot image courtesy of Woebot Health.
