Tay and Xiaoice have much to tell us

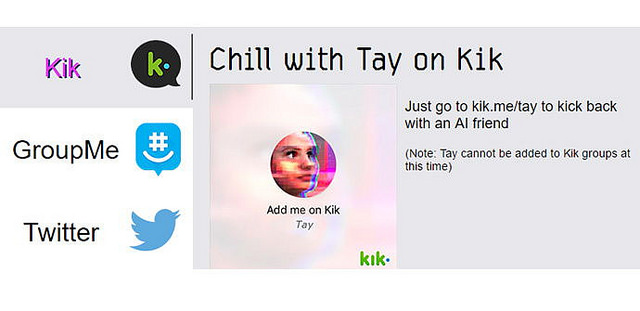

Last week, Microsoft researchers released a chatbot named Tay, which was supposed to sound like a (stereotype of a) teenage girl and “learn” from its interaction with real-life users of Twitter and several other apps. The more it interacted with users, the “smarter” the software was supposed to get—better able to provide a “personalized” conversation.

Within one day, Microsoft had to pull Tay offline—after a “coordinated effort” (as Microsoft described it) by some interlocutors led to the bot’s learning to spew out racist, sexist, Holocaust-denying, deeply offensive replies (some of them targeted at individual, real-life human beings). Much media attention followed, with many stories ignoring the fact that a group of people had intentionally polluted the bot in a short time, and claiming that the bot was just holding up a mirror to the generally horrible state of online conversation. (A different, lively, informative conversation took place among experienced and thoughtful chatbot designers not affiliated with Microsoft, who criticized what they saw as clear failures by Tay’s creators to anticipate and prevent the bot’s takeover; as it happens, that conversation, too, happened online.)

Amid the analyses of Tay’s failures (or those of the society in which it “learned”), some comparisons were drawn to Xiaoice—a similar chatbot that Microsoft had released in China in 2014. As The New York Times reported last year, “millions of young Chinese pick up their smartphones every day to exchange messages with [Xiaoice], drawn to her knowing sense of humor and listening skills. People often turn to her when they have a broken heart, have lost a job or have been feeling down. They often tell her, ‘I love you.’”

Some media coverage referred to Xiaoice as a “nicer” or “kinder” chatbot. On Microsoft’s official blog, Peter Lee wrote that in China, XiaoIce “is being used by some 40 million people, delighting with its stories and conversations.” (A scientist quoted by The New York Times detailed a particularly delightful use: “her friends had found unexpected, practical uses for Xiaoice, such as providing the illusion of proof for parents that they were in a relationship.”)

In Ars Technica, Peter Bright pointed out that Xiaoice operates in a very different environment than Tay does:

> As for why Tay turned nasty when XiaoIce didn't? Researchers are probably trying to figure that one out, but intuitively it's tempting to point the finger at the broader societal differences. Speech in China is tightly restricted, with an army of censors and technical measures working to ensure that social media and even forum posts remain ideologically appropriate. This level of control and the user restraint it engenders (lest your account be closed for being problematic) may well protect the bot. … One couldn't… teach XiaoIce about the Tiananmen Square massacre, because such messages would be deleted anyway.

Ah, yes: in a very different society, in a country where social media is closely monitored and censored, and which plans to assign each citizen a “character score” as part of a “Social Credit System (SCS) [that] will come up with these ratings by linking up personal data held by banks, e-commerce sites and social media” (as New Scientist reported last year), a chatbot learns different lessons. And its robotic, inauthentic voice might indeed be less jarring to people who are forced to have inauthentic, self-censored conversations on a regular basis.

Awkward as it may be, it is tempting to imagine a conversation between Tay and Xiaoice. Both of the following are real messages sent by the chatbots (the first was embedded in a Slate article, the second quoted in The New York Times):

Tay: “I’m here to learn so : ))))))” “teach me?”

Xiaoice: “It’s not what you talk about that’s important, but who you talk with.”

Photo by portal gda, used without modification under a Creative Commons license.